The phone rings. You see your daughter’s name on the caller ID. You answer. She’s sobbing. She says she’s been in a car accident. She needs money — right now. Cash. Gift cards. Wire transfer. Don’t call the police.

Then a man comes on the line.

That call happened to a woman in Missoula, Montana, in May 2026. Her daughter’s voice was so convincing (“I know her scared cry,” she said) that she nearly wired thousands of dollars before frantically reaching her daughter’s workplace and finding her safe at her desk.

That’s what AI voice cloning scams do. They clone the most trusted voice in your world. Then they use it against you.

This isn’t science fiction. The FTC logged over 250,000 complaints about AI voice scams in Q1 2026 alone. And most victims never report it at all.

The Scale of AI Voice Cloning Scams in 2026

The numbers aren’t alarming. They’re terrifying.

⚠️ ALERT: Voice phishing (vishing) attacks surged 442% in 2025 due to AI-driven techniques. Deepfake-enabled vishing attacks surged 1,600% in Q1 2025 versus Q4 2024 alone. AI scams overall spiked 1,210% in a single year.

Imposter scams — where someone pretends to be a family member — are now the most reported fraud complaint to the FTC. Cases jumped roughly 19% to 1 million in 2025. Losses climbed past $3.5 billion.

AI voice cloning scams have already cost elderly Americans over $2.3 billion in 2026 alone, according to FBI data. Global losses are projected to hit $8 billion by year-end.

And here’s the part that makes this so hard to stop: only 15% of victims report it. Most suffer in silence, ashamed they fell for it. Which means the real numbers are catastrophically higher.
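To put a rough number on that gap, here is a back-of-the-envelope sketch in Python. It assumes the 15% reporting rate applies evenly to the 250,000 Q1 2026 complaints cited earlier, which is a simplification for illustration and not an official estimate. The function name `estimate_true_incidents` is hypothetical.

```python
def estimate_true_incidents(reported: int, reporting_rate: float) -> int:
    """Scale a reported count up by the share of victims who report."""
    if not 0 < reporting_rate <= 1:
        raise ValueError("reporting_rate must be in (0, 1]")
    return round(reported / reporting_rate)


# Both figures come from this article; combining them is an assumption.
reported_q1_2026 = 250_000
reporting_rate = 0.15

estimated = estimate_true_incidents(reported_q1_2026, reporting_rate)
print(f"Estimated incidents: {estimated:,}")
print(f"Multiplier: {1 / reporting_rate:.1f}x the reported count")
```

Under those assumptions, the estimate lands at roughly 1.67 million incidents for the quarter, about 6.7 times the reported figure.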

1 in 10 Americans has now experienced an AI voice clone scam directly or through someone in their household. 77% of those victims lost money.

This is no longer a fringe threat. This is the defining consumer fraud of our era.

How AI Voice Cloning Actually Works

The technology is brutally simple. And that’s what makes it so dangerous.

Step 1 — Voice harvesting. A scammer finds a TikTok, Instagram Reel, Facebook video, or YouTube clip with your family member’s voice. Could be 3 seconds. That’s enough.

Step 2 — Voice cloning. The audio is uploaded to a free or near-free AI voice cloning tool. The model trains on the sample and outputs a synthetic voice that replicates pitch, cadence, accent, and emotional inflection.

Step 3 — Script injection. The scammer types a distress script: “Mom, I need help — I’ve been in an accident.” The AI renders it in your child’s cloned voice. Background noise is added for realism.

Step 4 — The call. A human accomplice dials you. The cloned voice plays. You hear your child. Your adrenaline fires. Your logical brain shuts down.

Here’s the visual breakdown of how fast this happens:

[Public Social Media Post]

↓

[3-30 seconds of audio extracted]

↓

[AI voice cloning tool processes sample]

↓

[Scammer types any script they want]

↓

[Your loved one's voice says it]

↓

[Phone rings. Your loved one's name on caller ID. Panic sets in.]

↓

[Wire transfer / gift card / crypto ATM]

🔴 WARNING: The 2026 International AI Safety Report confirmed these tools are free, require zero technical expertise, and can be used anonymously. The total cost to run this scam? Approximately $0 and 30 minutes.

Voice cloning has now crossed what Fortune called the “indistinguishable threshold.” Human listeners can no longer reliably tell cloned voices from real ones. That’s not a warning anymore. That’s the current reality.

The Most Dangerous AI Voice Cloning Scam Types

Not all AI voice cloning scams look the same. Knowing the playbook helps you recognize the attack before it lands.

🚨 The Grandparent Scam (Evolved)

The oldest scam in the book, now supercharged. A grandparent receives a call in their actual grandchild’s cloned voice: “Grandma, I’ve been arrested. I need bail money. Don’t tell Mom.”

The voice isn’t similar. It’s identical.

Adults aged 60+ account for 43% of total AI fraud losses despite being involved in fewer incidents. The average loss per incident for seniors is devastating, often wiping out retirement savings.

🚨 The Virtual Kidnapping Scam

Your child’s voice appears to say they’ve been taken. A man comes on and demands ransom. A 2025 FBI warning highlighted cases where demanded amounts ranged from $2,500 to $15,000, paid in Bitcoin, gift cards, or wire transfers before the victim could verify their child was safe at school.

🚨 The Accident Emergency Scam

“Mom, I was in a crash. I need you to send money for the hospital / towing / legal fees. Don’t call 911.”

The urgency is the weapon. They need you panicked and moving fast.

🚨 The Jury Duty / IRS Impersonator Scam

AI voice cloning doesn’t just target family voices. Scammers now impersonate government officials, police officers, and IRS agents with authoritative-sounding synthetic voices to demand immediate payment for fake warrants and missed jury duty.

🚨 The “Scam-as-a-Service” Industrial Operation

These aren’t lone wolves anymore. Criminal networks sell packaged fraud kits (AI voice cloning, deepfake video, fake website generators, and target lists) for as little as $60/month. One person can now run a professional scam operation that previously required a full criminal organization.

| Scam Type | Target | Demanded Payment |
|---|---|---|
| Grandparent Scam | Seniors 60+ | Gift cards, wire transfer |
| Virtual Kidnapping | Parents | Crypto ATM, wire transfer |
| Accident Emergency | Family members | Zelle, PayPal, gift cards |
| Government Impersonation | Anyone | Wire transfer, Bitcoin |
| Executive Fraud (BEC) | Businesses | Wire transfer |

⚠️ ALERT: Urgency-based requests appear in over 70% of AI voice scams. Spoofed caller ID — showing your child’s actual name and number — appears in more than 80% of voice phishing attacks. If a call feels rushed and demands money, that pressure IS the scam.

Red Flags: How to Detect an AI Voice Cloning Call

Audio detection is increasingly unreliable in 2026. But there are still tells — if you know what to listen for.

Listen for these signs of a cloned voice:

- Unnatural emotional flatness — Real panic has rhythm. AI panic sounds metronomic.

- Audio artifacts — Slight clicks, unnatural breath sounds, or micro-pauses in odd places.

- Hesitation on personal questions — Ask something only the real person would know. AI clones can’t guess private memories.

- “Metronome” speech quality — Real voices accelerate and decelerate. AI voices maintain suspiciously uniform pacing.

- Refusal to video call — Any refusal to switch to FaceTime or video should trigger maximum suspicion.

Also watch for these behavioral red flags:

- Demands for payment methods that are hard to trace or reverse: gift cards, wire transfers, crypto ATMs, or Zelle

- “Don’t call the police” or “Don’t tell anyone”

- “Act now or something terrible will happen”

- Caller ID shows a family member’s name but the situation makes no sense

- They know your name, your family member’s name, your city — but nothing private

🔴 WARNING: Scammers pull names, locations, and family relationships from your public social media profiles before calling. This is why the call sounds specific and credible. They’ve done their homework.

The hard truth: even trained experts get fooled. Your defense cannot rely on listening carefully. It requires a system.

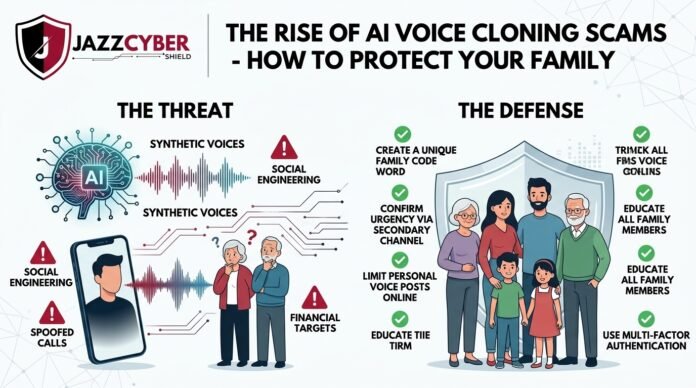

Your Family’s #1 Defense: The AI Voice Cloning Safe Word System

The FTC, FBI, McAfee, and the BBB all agree on the single most effective low-tech defense against AI voice cloning scams: the family safe word.

This is a secret code phrase known only to your immediate family. It is never posted online. It is never shared with anyone outside the family. It is the one thing an AI clone cannot guess — because it was never trained on it.

How to set up your family safe word system:

- Choose a nonsensical, random phrase. Think “Purple Cactus” or “Midnight Protocol.” Not a word associated with your life. Not a pet’s name or a street name that could be guessed.

- Tell every family member today — spouse, kids, parents, siblings. Cover everyone a scammer might impersonate.

- Establish the rule: Any emergency call requesting money MUST include the safe word before any action is taken.

- Practice it. Teens roll their eyes. Make them say it back to you anyway.

- Update it every year — or immediately if you think it may have been compromised.
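If your family struggles to come up with something truly random, a few lines of Python can pick the phrase for you. This is a minimal sketch, not a vetted tool: the word list below is a tiny hypothetical sample, and the function name `make_safe_phrase` is made up for illustration. It uses Python's standard `secrets` module so the choice does not depend on anything about your life.

```python
import secrets
from collections.abc import Sequence

# Hypothetical sample list. A real list should be far longer
# (hundreds or thousands of words) so phrases are hard to guess.
WORDS = (
    "purple", "cactus", "midnight", "lantern", "walrus", "pretzel",
    "orbit", "velvet", "thunder", "noodle", "compass", "gravel",
)


def make_safe_phrase(word_count: int = 2, words: Sequence[str] = WORDS) -> str:
    """Pick distinct words using a cryptographically secure random source."""
    if not 1 <= word_count <= len(words):
        raise ValueError("word_count must be between 1 and len(words)")
    pool = list(words)
    chosen = []
    for _ in range(word_count):
        word = secrets.choice(pool)
        pool.remove(word)  # avoid repeating the same word
        chosen.append(word.capitalize())
    return " ".join(chosen)


print(make_safe_phrase())
```

Run it once, write the result down on paper, and share it in person or by voice. Don't text it or store it anywhere a hacked account could expose it.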

What to do when you get a suspicious call:

Step 1: Stay calm. Don't hang up.

Step 2: Immediately ask: "What's the safe word?"

Step 3: If they can't answer → Hang up immediately.

Step 4: Call your family member directly on their saved number.

Step 5: If no answer → call 911 and report to FTC at ReportFraud.ftc.gov

⚠️ ALERT: A scammer cannot replicate private knowledge regardless of how well they clone the voice. Your safe word breaks the entire attack in one question.
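For readers who think in flowcharts, the five steps above can be sketched as a small Python function. Treat it as an illustration of the decision order only. The function name `handle_call` and its two yes/no inputs are hypothetical, and the branch for a correct safe word is an assumption, since the steps above only spell out what to do when the caller fails.

```python
def handle_call(caller_gave_safe_word: bool, reached_on_saved_number: bool) -> list[str]:
    """Return the ordered actions for a call that demands money."""
    steps = ["Stay calm and ask: What's the safe word?"]

    if caller_gave_safe_word:
        # Assumption: the article does not describe this branch in detail.
        steps.append("Safe word matched: confirm details before sending anything")
        return steps

    steps.append("Hang up immediately")
    steps.append("Call your family member on their saved number")

    if not reached_on_saved_number:
        steps.append("Call 911 and report to the FTC at ReportFraud.ftc.gov")

    return steps


# Example: caller fails the safe word and the real person does not pick up.
for number, step in enumerate(handle_call(False, False), start=1):
    print(f"{number}. {step}")
```

The point of writing it this way is that money never appears in any branch until the safe word or a callback has confirmed who you are talking to.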

A network firewall cannot stop phone calls or caller ID spoofing, because those travel over the phone network. What it can do is block phishing sites, malicious domains, and other hostile traffic at the router level. If you want that kind of enterprise-level protection at home, check out our firewall security hardware.

Social Media: Why Your Kids’ Videos Are a Gold Mine for Scammers

Here’s the uncomfortable truth most parents don’t want to hear: every video your child posts publicly is a voice training sample.

A 3-second audio clip is all it takes. Every TikTok, Instagram Reel, YouTube video, and Facebook video is a potential source. Over 53% of people share voice recordings online at least once per week. Social media users who share voice or video content are 3x more likely to be targeted by AI voice cloning scams.

What scammers harvest from your family’s profiles before calling:

- Your child’s voice (for cloning)

- Your name and phone number (for caller ID spoofing)

- Your city and neighborhood

- Your child’s school, friends, and activities

- Your family relationships and dynamics

- Recent life events they can reference to sound credible

The scam often starts with a hacked account. Criminals break into someone’s Instagram or Facebook through unsafe third-party app permissions. Once inside, they steal voice samples AND use the real account to deflect verification attempts — meaning if you try to message your daughter through Instagram to verify she’s safe, the scammer answers from her real account using her cloned voice.

Practical social media steps to reduce your family’s exposure:

- Set all family accounts to private

- Audit which third-party apps have “Sign in with Facebook/Google” access — revoke any you don’t recognize

- Teach teens not to post long solo videos publicly

- Remove your phone number from all public social media profiles

- Be careful what you post about your children’s schedules, schools, and routines

This connects directly to your home network security. If your router is poorly configured, scammers and hackers may access devices on your network to harvest data. Learn how to harden your setup in our guide on router settings you must change.

What the Law Says — And What It Doesn’t Cover Yet

Congress is finally paying attention — but regulation is chasing a runaway train.

As of April 2026, both the Senate Commerce Committee and House Energy and Commerce Committee announced hearings focused specifically on AI voice fraud. Two major legislative efforts are in progress:

The Voice Cloning Protection Act — Would require explicit consent before any person’s voice can be used to train AI models or generate synthetic speech. Similar to existing biometric laws in Illinois and Texas.

The DEEPFAKES Accountability Act — Would require digital watermarking of AI-generated audio and video, making synthetic media identifiable to detection tools.

FTC authority expansion — Proposals to give the FTC explicit authority to regulate deceptive AI-generated communications under existing consumer protection mandates.

The FTC has already warned that imposter scams are its top fraud category. You can report a voice cloning scam at ReportFraud.ftc.gov. CISA has also published guidance on synthetic media threats; see CISA’s AI security resources.

For compliance frameworks on AI fraud for businesses, the NIST AI Risk Management Framework provides current guidance.

The reality: the technology is advancing faster than legislation. The tools exist today. The laws protecting you are still being written. You cannot wait for the government to solve this.

How to Protect Your Family Right Now

Here are the actionable steps every family needs to take this week — not next month. This week.

- Create your family safe word today. Call a family meeting tonight. Pick a phrase. Make sure everyone knows it. This is the single most important thing you can do.

- Set all social media accounts to private. Especially your children’s accounts. Review privacy settings on Facebook, Instagram, TikTok, and YouTube.

- Create a verification ritual. Agree as a family: any urgent call requesting money gets hung up on, and the person is called back directly at their saved number before any action is taken.

- Educate your parents and grandparents. Seniors are the highest-loss demographic. Call them. Walk them through the safe word system. Make sure they have your number saved correctly.

- Never send gift cards. No legitimate emergency — not bail, not hospital bills, not IRS debt — is ever resolved with iTunes gift cards or Google Play cards. That demand is 100% a scam signal.

- Trust the verification call over your emotions. If you get a distressing call, hang up and immediately call your family member’s saved number. If they answer normally, the call was a scam. If they don’t answer, call 911.

- Use strong network security at home. Scammers research targets by compromising home networks and devices. Secure your Wi-Fi, update your router firmware, and separate your IoT devices on a dedicated VLAN. Our guide on VLAN setup for home networks shows you exactly how.

- Report every suspicious call. Report to the FTC at ReportFraud.ftc.gov, your state Attorney General’s consumer protection office, and the Internet Crime Complaint Center at IC3.gov.

- Check if AI detection tools help. McAfee’s Deepfake Detector claims 96% accuracy at flagging synthetic audio. Hiya’s Deepfake Voice Detector runs as a mobile guard on incoming calls. These aren’t perfect, but they add a layer.

- Audit your home network security. A properly secured network with a business-grade SonicWall or WatchGuard firewall blocks command-and-control infrastructure used by scam operations, protecting your family at the network level, not just the device level.

✅ Quick Reference Checklist

Print this out. Put it on your fridge. Send it to your parents.

AI VOICE CLONING SCAM PROTECTION CHECKLIST

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

FAMILY SETUP

[ ] Family safe word created and shared with all members

[ ] Verification rule established (hang up + call back directly)

[ ] Grandparents and elderly family members briefed

[ ] Everyone has each other's numbers saved correctly

SOCIAL MEDIA LOCKDOWN

[ ] All family accounts set to private

[ ] Children's TikTok, Instagram, YouTube set to private

[ ] Phone numbers removed from public profiles

[ ] Third-party app permissions audited and revoked

WHEN A SUSPICIOUS CALL COMES IN

[ ] Ask for the safe word immediately

[ ] Do NOT send gift cards, wire transfers, or crypto

[ ] Hang up and call the person at their saved number

[ ] Call 911 if you cannot verify their safety

[ ] Report to FTC at ReportFraud.ftc.gov

HOME NETWORK SECURITY

[ ] Router firmware updated

[ ] Strong, unique Wi-Fi password set

[ ] IoT devices on separate VLAN

[ ] Consider business-grade firewall for home office use

Frequently Asked Questions

Q: How much audio does a scammer need to clone someone’s voice?

A: As little as 3 seconds of clean audio is enough for modern AI voice cloning tools to create a convincing synthetic voice. Longer samples — 15 to 30 seconds — produce higher-quality clones, but the technology works frighteningly well even on short clips pulled from social media.

Q: Can I tell the difference between a cloned voice and the real one?

A: Not reliably, and that’s the core problem. Fortune’s reporting in late 2025 confirmed that voice cloning has crossed the “indistinguishable threshold.” Even cybersecurity experts struggle. Your defense cannot be “listen very carefully” — it must be a system like the safe word.

Q: What should I do if I already sent money?

A: Contact your bank immediately to attempt a reversal. If you used gift cards, call the card issuer — some have fraud recovery programs. File a report with the FTC at ReportFraud.ftc.gov, your local police, and the FBI’s IC3.gov. Act fast — every minute counts with wire transfers.

Q: Are kids or elderly people more at risk?

A: Both are high-risk, but for different reasons. Adults 60 and older account for 43% of total losses — they tend to have more savings and may be less familiar with the technology. Young adults 18–29 report the highest exposure rate to AI scams. Kids’ voices are most commonly harvested for impersonation because scammers call their parents.

Q: Is this illegal? Are scammers getting caught?

A: Yes, it’s illegal. Impersonation fraud, wire fraud, and extortion are all prosecutable. But most operations are run from overseas — Eastern Europe and Southeast Asia account for an estimated 70% of attacks — making prosecution extremely difficult. Congress is working on new AI-specific legislation, but enforcement gaps remain wide. Your best protection is prevention, not prosecution.

Conclusion

AI voice cloning scams are the fastest-growing fraud category in 2026. The tools are free or nearly free. The attacks are industrialized. And your family’s voice samples are already publicly available on the internet.

The good news: the defense is simple. A family safe word costs nothing and defeats the attack completely. No amount of AI sophistication can replicate a private code only your family knows. Start there. Start today.

Share this article with your parents, your kids, your coworkers. Parents are already passing this warning around because the scam has hit someone they know. The Missoula mother who heard her daughter’s “scared cry” nearly wired thousands of dollars before a call to her daughter’s workplace stopped her. Not everyone gets that chance. Build your system before you need it.

And if you want to add a genuine layer of network security protection that blocks phishing infrastructure and malicious domains before they reach your devices, explore our security solutions at Jazz Cyber Shield.

Related Reading

- Hidden Dangers of Public Wi-Fi in 2026 — What You Need to Know

- How Hackers Break Into Security Cameras

- Router Settings You Must Change Right Now

- VLAN for Home Network 2026 — Full Setup Guide

- Why Small Businesses Close After a Cyberattack